In a current project I needed criteria-based Deprovisioning: if one set of criteria was true, the user should be deleted from one system; if another set was true, the user should be deleted from another system. With declarative Provisioning, the only way to do this without code in FIM 2010 R2 and MIM 2016 is to use EREs (Expected Rule Entries). In this case, however, that would have introduced over 2 million ERE objects, and I concluded that was not going to be the best solution. All Provisioning at this customer is done using Declarative rules and Outbound Scoping Filters.

Another codeless option is Søren Granfeldt's Codeless Provisioning Framework, available on CodePlex. In this particular case, however, third-party add-ons would be “hard” to get approved.

So I decided that, for the first time in years, I would write an MVExtension. Just a warning! Before you start building any code or configuring any Deprovisioning, you should read the old but still very valid article written by Carol Wapshere on Account Deprovisioning Scenarios. I decided that the solution Carol describes as “Deprovision based on attribute change” was what I needed.

Let me show you how I solved my problem using about 10 lines of code.

Criteria Logic

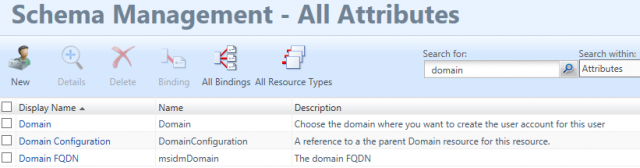

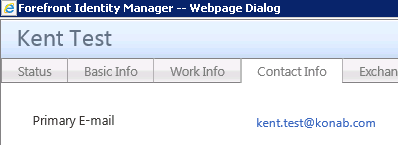

I like my FIM Service and Synchronization Service; together they can solve almost all the logical problems I face. In this case I extended the schema with some boolean attributes, one for each MA. Using a combination of Synchronization and FIM Service logic I could then mimic the criteria the customer had defined. Each boolean flag was set to false whenever I wanted the Deprovisioning to happen.

Configuration File

I created a configuration file I could use to make the code in the MVExtension as generic as possible.

Below is an example.

    <configuration>
        <MA Name="Exchange" Deprovision="true" Flag="flagExchange">
            <person Deprovision="true" />
        </MA>
        <MA Name="AD" Deprovision="true" Flag="flagAD">
            <person Deprovision="true" />
            <group Deprovision="false" />
        </MA>
    </configuration>

Deprovisioning can be turned on/off for each MA, and also for each Metaverse ObjectType within that MA. An ObjectType that is not listed under an MA is never deprovisioned from it. The configuration also names the boolean Metaverse attribute that decides whether Deprovision should happen or not.

MVExtension.dll

Time to start Visual Studio and create the MVExtension.dll. Forgive me if the code is not optimal; I just don’t write code as often as I did 10 years ago.

I first define the XmlDocument Config as a class-level field, so that every method in the extension can reach it.

    public class MVExtensionObject : IMVSynchronization
    {
        // Config is a field shared by Initialize and Provision
        System.Xml.XmlDocument Config = new System.Xml.XmlDocument();

In the Initialize method I load the configuration file.

    void IMVSynchronization.Initialize()
    {
        // Read the .config file from the Synchronization Service Extensions folder
        Config.Load(System.IO.Path.Combine(Utils.ExtensionsDirectory, "MVExtension.config"));
    }
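Because a typo in the configuration file would otherwise only surface in the middle of a synchronization run, it may be worth checking the file right after loading it. The following is a minimal sketch of such a check, not part of the original extension: the class `DeprovisionConfigValidator` and its `Validate` method are hypothetical names, and the rules it enforces (every MA needs `Name`, `Flag` and a parsable `Deprovision`, and every object type element needs a parsable `Deprovision`) are assumptions derived from the example file above.

```csharp
using System;
using System.Collections.Generic;
using System.Xml;

// Hypothetical helper, not part of the original MVExtension.
public static class DeprovisionConfigValidator
{
    // Returns human-readable problems; an empty list means the file looks usable.
    public static List<string> Validate(XmlDocument config)
    {
        var problems = new List<string>();
        XmlNodeList mas = config.SelectNodes("/configuration/MA");
        if (mas == null || mas.Count == 0)
        {
            problems.Add("No <MA> elements found under <configuration>.");
            return problems;
        }

        foreach (XmlNode ma in mas)
        {
            string name = GetAttribute(ma, "Name");
            string label = name ?? "(unnamed MA)";
            if (name == null) problems.Add("An <MA> element is missing the Name attribute.");
            if (GetAttribute(ma, "Flag") == null) problems.Add(label + ": missing Flag attribute.");
            CheckBoolean(ma, label, problems);

            // Child elements are Metaverse object types, each with its own switch.
            foreach (XmlNode child in ma.ChildNodes)
            {
                if (child.NodeType != XmlNodeType.Element) continue;
                CheckBoolean(child, label + "/" + child.Name, problems);
            }
        }
        return problems;
    }

    // Returns the attribute value, or null when it is absent or empty.
    private static string GetAttribute(XmlNode node, string attributeName)
    {
        XmlNode attribute = node.Attributes == null ? null : node.Attributes.GetNamedItem(attributeName);
        return attribute == null || attribute.Value.Length == 0 ? null : attribute.Value;
    }

    // Records a problem when Deprovision is missing or not a boolean.
    private static void CheckBoolean(XmlNode node, string label, List<string> problems)
    {
        string raw = GetAttribute(node, "Deprovision");
        bool parsed;
        if (raw == null || !bool.TryParse(raw, out parsed))
            problems.Add(label + ": Deprovision must be \"true\" or \"false\".");
    }
}
```

One way to use it would be to call `Validate(Config)` at the end of Initialize and throw an exception listing the problems if the list is not empty, so that a broken file stops the run before any object is processed.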

And finally, in the Provision method, I add the lines that perform the Deprovisioning based on the information in the configuration file and the boolean value of the defined attribute.

    void IMVSynchronization.Provision(MVEntry mventry)
    {
        foreach (System.Xml.XmlNode MA in Config.SelectNodes("/configuration/MA"))
        {
            // Check if ObjectType is in scope or not
            System.Xml.XmlNode TypeNode = MA.SelectSingleNode(mventry.ObjectType);
            if (TypeNode != null)
            {
                string MAName = MA.Attributes.GetNamedItem("Name").Value;
                bool MADeprovision = Convert.ToBoolean(MA.Attributes.GetNamedItem("Deprovision").Value);
                bool TypeDeprovision = Convert.ToBoolean(TypeNode.Attributes.GetNamedItem("Deprovision").Value);
                string Flag = MA.Attributes.GetNamedItem("Flag").Value;

                // We have the information. Let's go!
                // && short-circuits, so the flag is only read when Deprovisioning is enabled.
                if (MADeprovision && TypeDeprovision && mventry[Flag].IsPresent)
                {
                    // Deprovision only when a connector exists and the flag is explicitly false.
                    // Note: only the first connector in this MA is deprovisioned.
                    if (mventry.ConnectedMAs[MAName].Connectors.Count > 0 && !mventry[Flag].BooleanValue)
                    {
                        mventry.ConnectedMAs[MAName].Connectors.ByIndex[0].Deprovision();
                    }
                }
            }
        }
    }
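The decision made inside Provision can be tried out without a Synchronization Service at hand. The sketch below restates the same rules as a plain function over the configuration XML; it is only an illustration under stated assumptions, not the code that runs in the extension. The class `DeprovisionDecision`, the method `MAsToDeprovision` and its parameters are hypothetical: a dictionary stands in for the Metaverse flags (a missing key models an attribute that is not present) and a collection of names stands in for the MAs where the object has a connector.

```csharp
using System;
using System.Collections.Generic;
using System.Xml;

// Hypothetical stand-in for the decision logic; it does not touch the sync engine.
public static class DeprovisionDecision
{
    // flags: Metaverse flag name -> value; a missing key means "attribute not present".
    // connectedMAs: names of MAs where the object has at least one connector.
    public static List<string> MAsToDeprovision(
        XmlDocument config,
        string objectType,
        IDictionary<string, bool> flags,
        ICollection<string> connectedMAs)
    {
        var result = new List<string>();
        foreach (XmlNode ma in config.SelectNodes("/configuration/MA"))
        {
            // Object types not listed under this MA are out of scope.
            XmlNode typeNode = ma.SelectSingleNode(objectType);
            if (typeNode == null) continue;

            string maName = ma.Attributes.GetNamedItem("Name").Value;
            string flagName = ma.Attributes.GetNamedItem("Flag").Value;
            bool maEnabled = Convert.ToBoolean(ma.Attributes.GetNamedItem("Deprovision").Value);
            bool typeEnabled = Convert.ToBoolean(typeNode.Attributes.GetNamedItem("Deprovision").Value);

            bool flagValue;
            bool flagPresent = flags.TryGetValue(flagName, out flagValue);

            // Same rule as in Provision: both switches on, flag present and false, connector exists.
            if (maEnabled && typeEnabled && flagPresent && !flagValue && connectedMAs.Contains(maName))
                result.Add(maName);
        }
        return result;
    }
}
```

With the example configuration shown earlier, a person connected to both MAs with flagExchange = false and flagAD = true would be selected for Deprovisioning from Exchange only, while a group with flagAD = false would not be selected anywhere, because the group element under AD has Deprovision="false" and Exchange does not list groups at all.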

Conclusion

Since Microsoft does not offer a viable way of performing criteria-based deprovisioning, writing your own MVExtension is sometimes the only logical solution. With the solution presented here, I can now deprovision based on any type of criteria on any type of resource. All I need is to get my Synchronization, FIM Service and maybe Workflows to do their job of keeping the boolean flags correctly set. In this particular project I actually ended up re-using these flags in the Outbound Scoping Filters, making them a lot less complex than before. So now true = Provision and false = Deprovision.